My research focuses on building trustworthy, safe, and performant AI for autonomous physical systems. I develop principled methods that combine learning with formal safety guarantees, spanning foundation models for Physical AI, safe offline reinforcement learning, generative policy synthesis, and agentic AI. Below are the key research themes I work on.

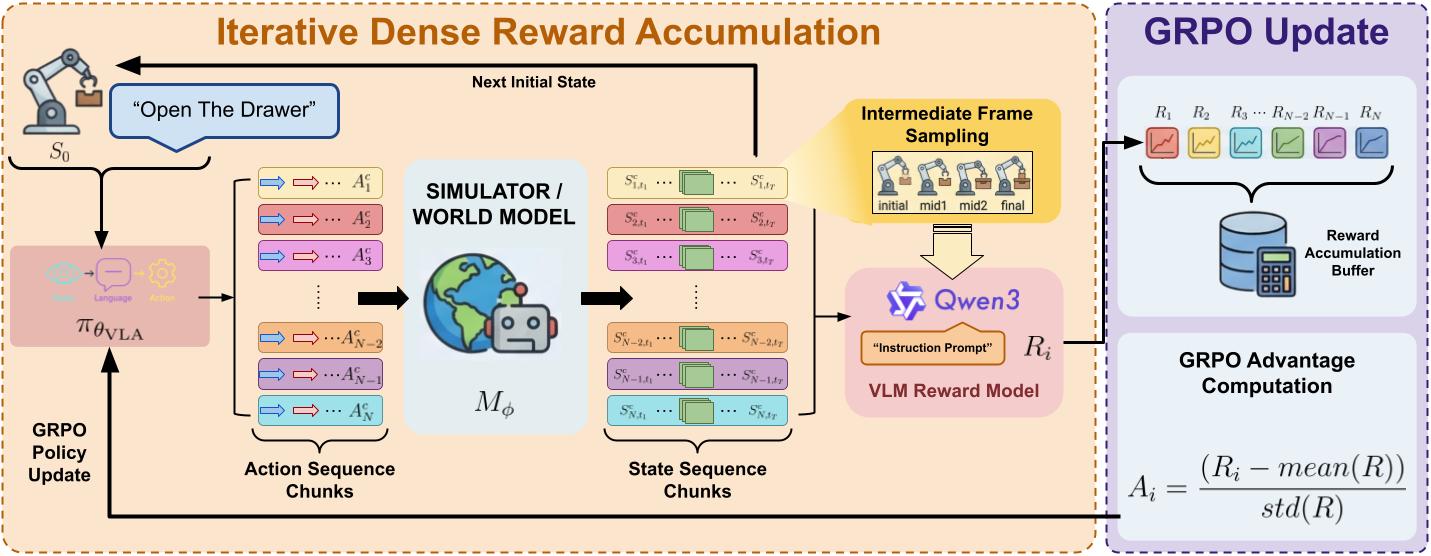

Foundation Models for Physical AI

We work on building safe and reliable foundation models for embodied physical systems. This includes improving the safety and inference-time performance of Vision-Language-Action (VLA) models, safe agentic reasoning for autonomous agents, and bridging large-scale pre-trained models with formal safety constraints for real-world robotic deployment.

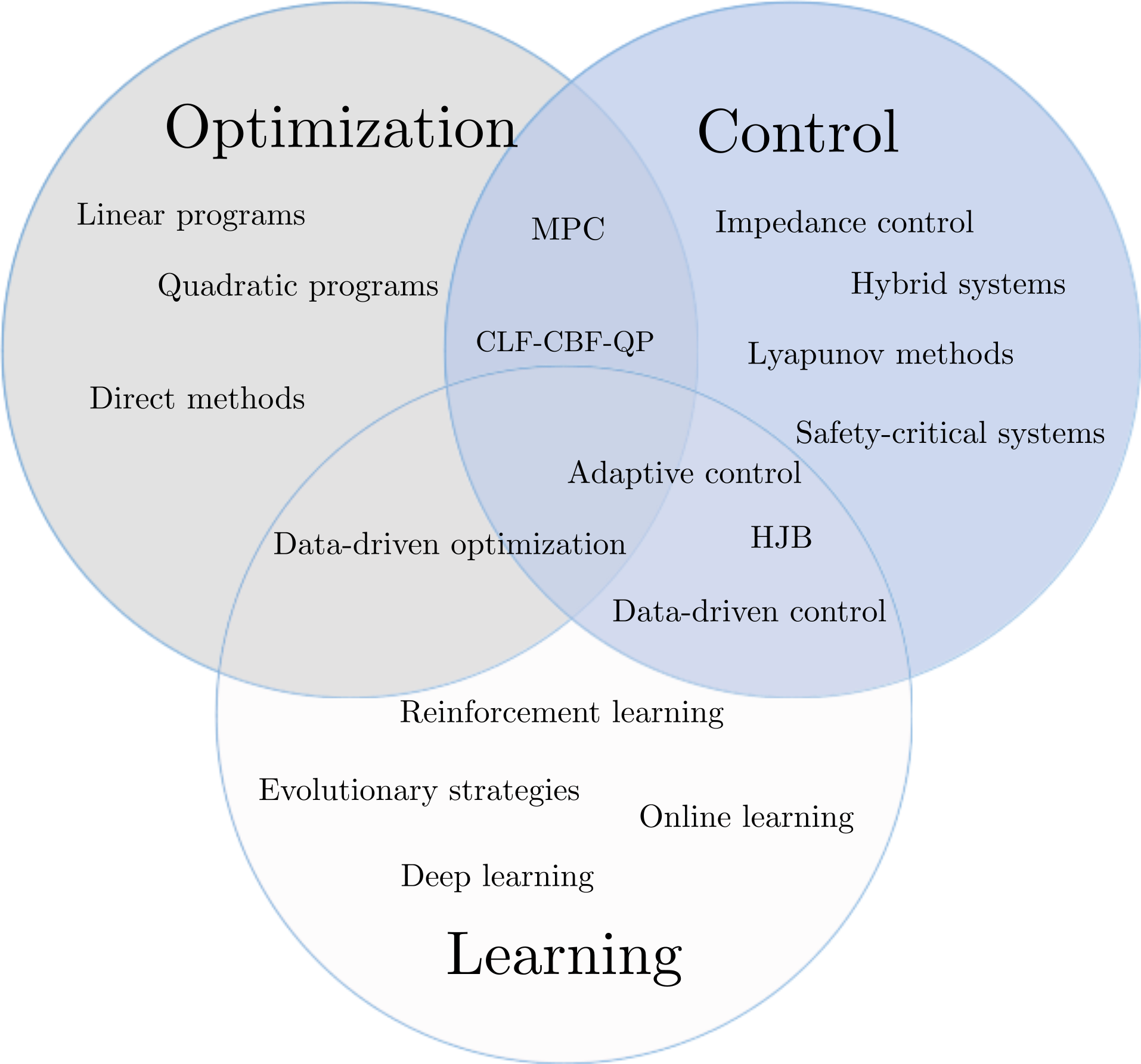

Safe and Optimal Control of Autonomous Systems

We develop physics-informed machine learning frameworks that co-optimize safety and performance for autonomous systems. By formulating the task as a state-constrained optimal control problem and approximating its solution with neural networks trained on HJB-inspired losses, we obtain scalable controllers with formal safety guarantees for high-dimensional systems, from ground vehicles to manipulators.

Key works: PIML-SOC (ICML 2025) · Robust PIML-SOC (IJRR) · MAD-PINN

Verified Neural Control Barrier Functions

We develop methods to learn and formally verify Neural Control Barrier Functions (NCBFs) that provide rigorous safety certificates for dynamical systems. Our work addresses stochastic environments, conformal prediction-based verification, and vision-based safety — enabling safe controllers that scale beyond hand-designed barrier functions.

Key works: S-NCBF (CDC 2024) · SSPL (CDC 2025) · CP-NCBF
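For readers new to barrier functions, the standard deterministic condition that a learned certificate is meant to satisfy can be written as follows. This is the textbook form for a control-affine system, stated here as background under that assumption; it is not the stochastic or conformal-prediction variant developed in the works above, and the symbols $f$, $g$, $h_\theta$, and $\alpha$ are notation introduced for this sketch.

$$
\dot{x} = f(x) + g(x)\,u, \qquad \mathcal{C} = \{\, x : h_\theta(x) \ge 0 \,\}
$$

$$
\sup_{u \in \mathcal{U}} \Big[ \nabla h_\theta(x)^{\top} f(x) + \nabla h_\theta(x)^{\top} g(x)\,u + \alpha\big(h_\theta(x)\big) \Big] \ge 0 \quad \text{for all } x \in \mathcal{C}
$$

Here $h_\theta$ is the neural network, $\mathcal{C}$ is the safe set it certifies, and $\alpha$ is an extended class-$\mathcal{K}$ function. Verification amounts to checking that this inequality holds over the whole safe set rather than only at sampled training states.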

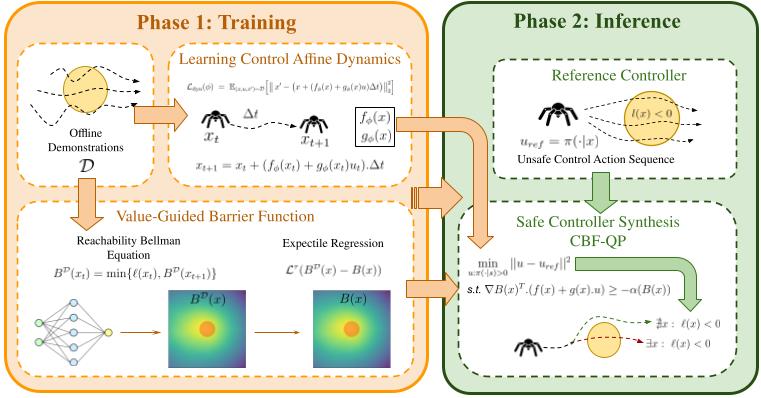

Safe Offline Reinforcement Learning

We develop frameworks for learning safe policies entirely from offline data, without online interaction. Our methods combine value-based safety propagation, control barrier functions, and generative models (flow matching) to synthesize controllers that achieve near-zero safety violations while maintaining strong task performance — critical for deploying RL in the real world.

Key works: V-OCBF (TMLR) · EpiFlow · Safe Flow Q-Learning

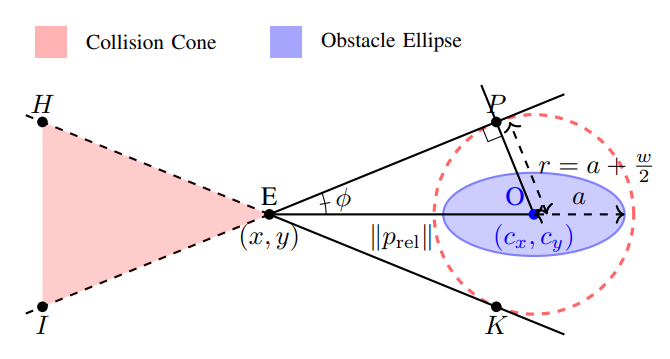

Collision Cone Control Barrier Functions

We introduce a geometric framework for safe navigation based on collision cones and control barrier functions. This line of work provides real-time obstacle avoidance guarantees for UAVs, UGVs, legged robots, and multi-agent systems operating in dynamic, cluttered environments — validated extensively in hardware.

Key works: C3BF (TCST) · C3BF-UAV (ACC 2024) · C3BF-UGV (ACC 2024) · PolyC2BF (ECC 2024)
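The collision-cone idea can be illustrated with a small sketch. It assumes a single circular obstacle, with `p_rel` and `v_rel` taken as the obstacle's position and velocity relative to the robot and `radius` as an inflated obstacle radius; the function name and the example values are hypothetical and not taken from the papers. The candidate is non-negative exactly when the relative velocity points outside the cone of directions that lead to a collision.

```python
import math


def collision_cone_cbf(p_rel, v_rel, radius):
    """Illustrative collision-cone barrier candidate (hypothetical helper).

    h = <p_rel, v_rel> + ||v_rel|| * sqrt(||p_rel||^2 - radius^2)

    h >= 0 means the relative velocity lies outside the collision cone.
    """
    dist_sq = sum(p * p for p in p_rel)
    if dist_sq <= radius ** 2:
        # The cone is undefined once the robot is inside the inflated radius.
        raise ValueError("robot is inside the inflated obstacle radius")
    dot = sum(p * v for p, v in zip(p_rel, v_rel))
    speed = math.sqrt(sum(v * v for v in v_rel))
    return dot + speed * math.sqrt(dist_sq - radius ** 2)


# Obstacle 5 m ahead, closing head-on: inside the cone, so h is negative.
h_head_on = collision_cone_cbf((5.0, 0.0), (-1.0, 0.0), 1.0)

# Same obstacle, relative motion perpendicular to the line of sight: h is positive.
h_sideways = collision_cone_cbf((5.0, 0.0), (0.0, 1.0), 1.0)
```

In a controller, a candidate of this kind would typically be enforced through a quadratic program that minimally modifies a nominal input so the barrier condition holds; that step depends on the robot's dynamics and is omitted here.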

Invited Talks

- Mar 2025 “A Physics Informed Machine Learning approach for Safe and Optimal Control of Autonomous Systems”, Safe and Intelligent Autonomy Lab, Stanford University (Slides)

- Jul 2024 “Learning Formally Verified Neural Control Barrier Functions in Stochastic Environments”, Cyber Physical Systems Symposium (CyPhySS), Bangalore

- Sept 2023 “A Collision Cone Approach for Control Barrier Functions”, Systems and Controls, IIT Bombay (Slides)

Research Mentoring

I have had the good fortune of working with and mentoring some fantastic student collaborators.

Ph.D. Students

- Prakrut Kotecha — Learning Safe Dynamics and Control for Autonomous Systems, IISc Bangalore (Jul 2025 – Present)

Master’s Students

- Pranav Tiwari — Safety Critical Control of Autonomous Systems, IISc Bangalore (Jul 2025 – Present)

- GVS Mothish — Control of Bipedal Robots in Uneven Terrain, IISc Bangalore (Sept 2022 – Jul 2024)

Bachelor’s Students

- Aditya Singh — Safety Critical Control of Autonomous Systems, IIT Patna (2024–Present)

- Shreenabh Agrawal — Safety Critical Control of Autonomous Systems, IISc Bangalore (2024–2025)

- Karthik Rajgopal — Design and Control of Bipedal Robot, BITS Pilani (2022–2024)

- Ravi Kola — Design and Control of Bipedal Robot, GTU (2023–2024)